On to the third and final installment on the topic of data quality for King County GIS (KCGIS). Part 1 – data quality within the framework of GIS data set maintenance prioritization – was followed by Part 2, where we looked at the processes and tools for validating database and file-system objects within the Spatial Data Warehouse (SDW). In Part 3 we’ll wrap up by looking at how we assess the quantitative completeness and qualitative content of metadata created for data we publish to the SDW.

Metadata, of course, is how data producers describe and advertise their data. It’s how we discuss what the data is, what it is not, and how (and how not) to use it. Metadata provides contact information on who to call if you have questions about the data, and provides explanations for all those pesky codes we store in our data tables. At King County we use the FGDC CSDGM ¹ as the structure and content guide for our metadata. Other standards exist, but we have found that irrespective of the content standard used by an organization, the key is consistency in application of the standard across the enterprise.

At King County, metadata quality begins at the ‘birth’ of a data set, where a template (in xml format) is customized for each data set. The template fills in standardized KCGIS information, such as disclaimers, and also structures the various contact information, based on an argument passed to the customized Python script which generates the template. In addition, the script builds out the entity and attribute section of the standard, prepopulating it with information queried from the data set. This ‘birthing’ step helps standardize the metadata across all SDW data sets, plus it gives the data steward a ‘jump start’ in completing new metadata.
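As a rough illustration of this step, here is a minimal sketch of a template generator. It is not the KCGIS script: the function name `build_template`, the sample field list and the disclaimer text are all hypothetical, and a real script would query the field names from the data set rather than take them as an argument. The element names (`idinfo`, `eainfo`, `attrlabl`, and so on) are the short tag names defined by the FGDC standard.

```python
import xml.etree.ElementTree as ET


def build_template(dataset_name, fields, disclaimer):
    """Return an FGDC-style metadata skeleton as an ElementTree element.

    `fields` is a list of (name, definition) pairs. Here they are passed in
    directly; in practice they would be queried from the data set.
    """
    root = ET.Element("metadata")

    # Identification section: the title is prefilled, the rest is left
    # for the data steward to complete.
    idinfo = ET.SubElement(root, "idinfo")
    citeinfo = ET.SubElement(ET.SubElement(idinfo, "citation"), "citeinfo")
    ET.SubElement(citeinfo, "title").text = dataset_name

    # Entity and attribute section: one <attr> element per field.
    detailed = ET.SubElement(ET.SubElement(root, "eainfo"), "detailed")
    enttyp = ET.SubElement(detailed, "enttyp")
    ET.SubElement(enttyp, "enttypl").text = dataset_name
    for name, definition in fields:
        attr = ET.SubElement(detailed, "attr")
        ET.SubElement(attr, "attrlabl").text = name
        ET.SubElement(attr, "attrdef").text = definition

    # Standard boilerplate shared by every data set (hypothetical text).
    distinfo = ET.SubElement(root, "distinfo")
    ET.SubElement(distinfo, "distliab").text = disclaimer
    return root


template = build_template(
    "parcel_area",
    [("PIN", "Parcel identification number"), ("ACRES", "")],
    "Hypothetical disclaimer text.",
)
print(ET.tostring(template, encoding="unicode"))
```

Leaving a definition empty, as for `ACRES` above, marks a spot the steward still has to fill in.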

This template is imported and upgraded into the data set using ArcCatalog tools. This aligns the metadata to the ArcGIS metadata structure so that the data steward can then complete various subjective sections, adding user value to the documentation. Guidance on how to complete these various metadata sections – such as the Abstract (Description) and Purpose (Summary), as well as often-neglected sections such as Data Quality reports and Lineage (Process Steps) – is provided in a metadata workbook and a more detailed, graphical help document which provides screenshot-by-screenshot guidance.

Quality control during metadata content entry is guided by inline help provided by ArcCatalog, as well as by color-coded messaging (x and ! hints) which flag incomplete sections and omitted tags. Stewards can also make use of tools, such as MP (metadata parser, found in the ArcToolbox) to report missing tags and inconsistent structure in the metadata. However, there are limitations in leveraging these options – e.g., the ! flags do not describe which specific error is being reported, and MP requires that you first export your ArcCatalog metadata to an xml file prior to running the tool. These limitations spurred KCGIS to develop a customized tool for performing quality assessment of our production metadata. The workflow surrounding this custom tool embeds other steps which make for a complete and automated metadata processing lifecycle.

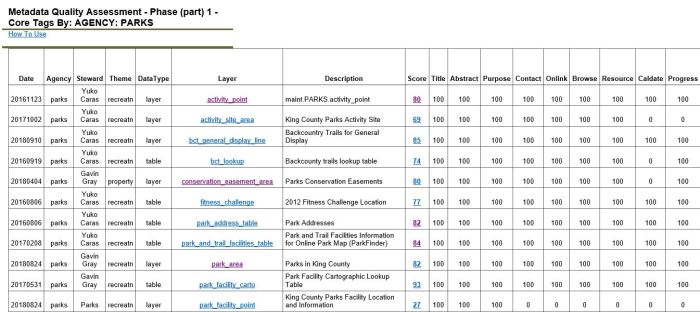

Results from customized Metadata Quality Assessment tool

Standardized filtered reports provide an overall ‘Quality Score’ and a breakdown by metadata section

Clicking on the Score provides a detailed summary of the missing tag information still required:

The main functions of the lifecycle workflow are:

- Exports the ArcGIS feature class/table metadata to a file-system xml document in FGDC format. This occurs when the data set is posted from staging to production;

- Creates htm versions of the metadata using the FGDC classic and the FGDC Frequently-Asked-Questions (FAQ) stylesheets;

- Publishes the xml and htm documents to a web server where the Spatial Data Catalog application sources the xml and dynamically displays a data set thumbnail image and key tags values, and hosts the classic/FAQ stylesheets;

- Evaluates the metadata compliance/completeness against the FGDC standard and KC best practices, and reports the ‘grade’ results to a summary page. Most useful are the detailed error logs, which describe exactly which tag is amiss. This allows stewards to quickly navigate to the specific error location in the metadata;

- Extracts a range of tag values and builds current enterprise-wide data dictionaries for several key tag groups:

  - Identification information (title, abstract, purpose, etc.)

  - Thematic Keywords (i.e., searchable tags)

  - Lineage Process Steps (i.e., the history of the data set)

  - Attributes (field name, definition, source and domain type)

  - Enumerated Domains (list of values, their decode value and count)

- Creates other statistical reports, such as the occurrence of attributes (fields) across the SDW (e.g., for a given attribute such as PIN, how many times does it occur and what are the other feature class/tables where it occurs).
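The evaluation step in the list above can be sketched roughly as follows. This is an assumption-laden illustration, not the KCGIS tool: the `REQUIRED_TAGS` checklist, the `assess` function and the simple percentage score are hypothetical stand-ins for whatever rules and weighting the production tool applies. The paths use FGDC short tag names.

```python
import xml.etree.ElementTree as ET

# Hypothetical checklist: section name -> element paths expected to have content.
REQUIRED_TAGS = {
    "Identification": [
        "idinfo/citation/citeinfo/title",
        "idinfo/descript/abstract",
        "idinfo/descript/purpose",
    ],
    "Data Quality": ["dataqual/lineage/procstep/procdesc"],
    "Attributes": ["eainfo/detailed/attr/attrdef"],
}


def assess(xml_text):
    """Return (score, missing), where missing lists (section, path) pairs."""
    root = ET.fromstring(xml_text)
    missing = []
    total = 0
    for section, paths in REQUIRED_TAGS.items():
        for path in paths:
            total += 1
            elements = root.findall(path)
            # A tag passes only if it is present at least once and
            # no occurrence of it is empty.
            if not elements or any(not (e.text or "").strip() for e in elements):
                missing.append((section, path))
    score = round(100 * (total - len(missing)) / total)
    return score, missing


SAMPLE = """<metadata>
  <idinfo>
    <citation><citeinfo><title>Parcels</title></citeinfo></citation>
    <descript><abstract>Parcel polygons.</abstract><purpose></purpose></descript>
  </idinfo>
  <eainfo><detailed>
    <attr><attrlabl>PIN</attrlabl><attrdef>Parcel identification number</attrdef></attr>
  </detailed></eainfo>
</metadata>"""

score, missing = assess(SAMPLE)
print(score)
for section, path in missing:
    print(section, path)
```

Reporting the path of each failing tag, rather than just a pass/fail flag, is what lets a steward go straight to the problem spot in the metadata.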

Grade and data dictionary results are presented as both htm and csv (spreadsheet) summaries. The KCGIS Center is not above some gentle public shaming in order to encourage metadata compliance. The grade reports are presented in several filtered views, including By Steward and By Agency. Reviewers can see how well an agency or given data steward is performing across all the data sets for which they are responsible. And to foster a little friendly competition, a graphical monthly quality summary by Agency is also provided.
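A filtered view such as By Agency or By Steward amounts to grouping the per-data-set grades by one field and summarizing each group. The sketch below shows one way that could look; the record layout, the agency and steward names, the scores and the `summarize` and `to_csv` helpers are all made up for illustration.

```python
import csv
import io
from collections import defaultdict
from statistics import mean


def summarize(records, key):
    """Group grade records by `key` and return (name, count, mean score) rows."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[key]].append(rec["score"])
    return sorted(
        (name, len(scores), round(mean(scores), 1))
        for name, scores in groups.items()
    )


def to_csv(rows, key):
    """Render summary rows as csv text with a header line."""
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow([key, "datasets", "mean_score"])
    writer.writerows(rows)
    return buf.getvalue()


# Hypothetical grade records, one per data set.
records = [
    {"dataset": "parcel_area", "steward": "A. Steward", "agency": "Agency A", "score": 90},
    {"dataset": "zoning", "steward": "B. Steward", "agency": "Agency B", "score": 60},
    {"dataset": "address_point", "steward": "A. Steward", "agency": "Agency A", "score": 75},
]

by_agency = summarize(records, "agency")
print(to_csv(by_agency, "agency"))
```

Passing `"steward"` instead of `"agency"` as the key produces the By Steward view from the same records.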

The Spatial Data Warehouse has hundreds of data sets contributed by multiple agencies across King County government. Managing the contents and the integrity of the data is a complex task supported by enterprise operations staff and the dedicated agency data stewards. Managing the quality and consistency of the database and file-system objects, the quality of data content in our data sets, and the completeness and quality of our data set metadata is an ongoing task. As GIS data become more and more ubiquitous in applications, open data sites and web mapping platforms, there will be a need for ever more robust and efficient quality control and assessment best practices.

¹Federal Geographic Data Committee – Content Standard for Digital Geospatial Metadata
